-

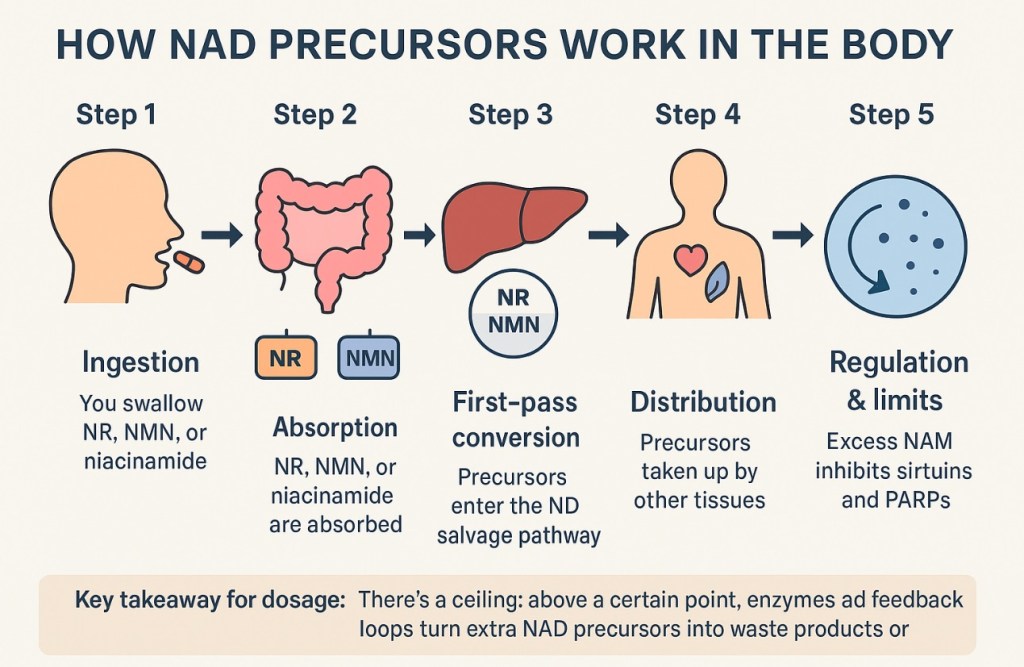

How NAD Precursors Work in the Body or what do I need to understand before taking a NAD supplement

Generated by ChatGPT (GPT-5 Thinking mini, OpenAI). Answer obtained August 10, 2025. Note: AI-generated content may contain inaccuracies; consult primary literature for clinical decisions.

Step 1 — Ingestion

• You swallow NR, NMN, or niacinamide.

• NAD⁺ itself is mostly broken down in the gut into smaller units before absorption.

Step 2 — Absorption

• NR: Taken up in the small intestine by nucleoside transporters.

• NMN: Likely broken down into NR before uptake in humans (still debated), though some evidence suggests a specific transporter exists in mice.

• Niacinamide: Readily absorbed via facilitated diffusion.

Step 3 — First-pass conversion

• In intestinal cells and liver, precursors enter the NAD salvage pathway:

• NR → NMN → NAD⁺

• NAM → NMN → NAD⁺

• Enzymes: NRK (nicotinamide riboside kinase) and NMNAT (nicotinamide mononucleotide adenylyltransferase) are key rate-limiting steps.

Step 4 — Distribution

• NAD⁺ made in the liver is not directly shipped out — instead, NAD precursors (like NAM) circulate and are taken up by other tissues to make their own NAD⁺.

Step 5 — Regulation & limits

• If intake is too high, excess NAM accumulates. This inhibits sirtuins and PARPs, counteracting benefits.

• Enzymes like CD38 constantly break down NAD⁺ — higher with age and inflammation, meaning older adults may “burn through” NAD⁺ faster.

• Cells balance NAD⁺ pools across the nucleus, cytosol, and mitochondria separately — boosting blood NAD⁺ doesn’t guarantee a big mitochondrial bump.

⸻

Key takeaway for dosage:

• There’s a ceiling: above a certain point, enzymes and feedback loops turn extra NAD precursors into waste products or inhibitors.

• For most healthy adults in studies, 300–500 mg/day NR or NMN moves NAD⁺ significantly; beyond 1 g/day, gains flatten and side effects (like flushing or digestive upset) can increase.

Prompt:

What do we know about the biology of NAD taken as a supplement. By biology is meant does oral ingestion really result in improving what we know the molecule does. Also biology is replete with examples of just the right amount being critical and that suggests dosage matters

Response

NAD biology is tricky, because the molecule is central to metabolism but also because taking it as a supplement doesn’t work as cleanly as people often assume. Let’s break it down carefully.

⸻

1. What NAD does in the body

• Core role: NAD⁺ (nicotinamide adenine dinucleotide) is a coenzyme that shuttles electrons in redox reactions — it’s essential for energy production (glycolysis, TCA cycle, oxidative phosphorylation).

• Other roles: It’s also a substrate for enzymes that regulate DNA repair (PARPs), gene expression (sirtuins), and cell signaling (CD38).

• Decline with age: Tissue NAD⁺ levels tend to drop with age and in certain diseases, which has spurred interest in supplements.

⸻

2. The problem with taking NAD⁺ directly

• Poor absorption: NAD⁺ itself is too large and charged to cross cell membranes efficiently and is rapidly broken down in the gut.

• Blood stability: Even if some NAD⁺ gets absorbed, blood enzymes degrade it quickly into smaller molecules before it reaches cells intact.

⸻

3. Why precursors are used instead

Most supplements aren’t pure NAD⁺ — they’re precursors that cells can convert into NAD⁺:

• Niacin (vitamin B3) — classic form, raises NAD⁺ but can cause flushing.

• Nicotinamide (NAM) — raises NAD⁺ but in high doses can inhibit sirtuins (feedback inhibition).

• Nicotinamide riboside (NR) — marketed for NAD⁺ boosting; shown to raise NAD⁺ in blood and some tissues in humans.

• Nicotinamide mononucleotide (NMN) — similar to NR, but one step closer to NAD⁺ in the biosynthetic pathway. Absorption route in humans is still debated.

⸻

4. What the evidence says about oral supplementation

• Human trials: Small clinical studies show NR and NMN can raise blood NAD⁺ by ~20–60% within days to weeks.

• Tissue effects: Less clear — increases in muscle NAD⁺ are modest in healthy young adults, but more noticeable in older adults or those with metabolic issues.

• Functional benefits: Early human data suggest possible improvements in some measures (insulin sensitivity, vascular function), but effects are modest and not always reproducible.

• Animal vs human: Mice show bigger and more consistent benefits (lifespan extension, improved mitochondrial function) than humans so far.

⸻

5. The dosage question — “just right” matters

Biology often follows a U-shaped curve:

• Too little NAD⁺ → impaired energy metabolism, DNA repair, resilience to stress.

• Too much NAD⁺ → potential issues:

• Sirtuin/PARP overactivation (could affect cell cycle or stress response).

• NAM accumulation from breakdown, which at high levels inhibits sirtuins.

• Possible support for growth of some cancer cells (since cancer often thrives on robust NAD⁺ metabolism).

• Most human trials use 250–1000 mg/day of NR or NMN and report it as safe for months to years — but long-term effects beyond that aren’t known.

⸻

6. Key uncertainties

• Optimal target NAD⁺ level for different ages and conditions isn’t known.

• Which tissues benefit most from supplementation — blood NAD⁺ isn’t always a good proxy for brain, heart, or muscle.

• Whether long-term high-dose use has subtle downsides, especially in those without NAD⁺ deficiency.

⸻

Bottom line

• Oral NAD⁺ itself doesn’t survive digestion well; precursors like NR and NMN do raise blood NAD⁺ in humans.

• There’s good biochemical evidence they work in principle, but the translation into large, lasting health gains in humans is still tentative.

• Dose likely matters a lot — both too little and too much could be counterproductive.

• At present, the strongest case for supplementation is in older adults or people with certain metabolic or mitochondrial disorders, not necessarily healthy young individuals.

-

Raja Ram Mohan Roy the father of the Indian Renaissance was born today, 22 May, in 1772

Today is the 251st birth anniversary of a non-conformist born in Bengal who studied Persian and Arabic along with Sanskrit to change and shape the intellectual life of India.

As an exercise in testing ChatGPT this is a piece that compares an article published about him in the Economic Times in 2018, his 246th birth anniversary with what ChatGPT provided in response to a prompt.

ChatGPT – Question / Prompt

Provide a life sketch of the Indian social reformer Raja Ram Mohan Roy in how he was a change agent

ChatGPT

Raja Ram Mohan Roy, an eminent Indian social reformer, played a significant role in transforming society during the early 19th century. He was a change agent in several areas, promoting progressive ideas and challenging traditional practices. Here’s a concise life sketch highlighting his contributions:

• Born in 1772, Raja Ram Mohan Roy emerged as a key figure in the Bengal Renaissance, advocating for modern education, scientific temper, and social reforms.

• He criticized social evils like Sati (the practice of widows self-immolating on their husbands’ funeral pyres) and worked towards its abolition. His efforts led to the passage of the Bengal Sati Regulation in 1829.

• Raja Ram Mohan Roy aimed to bridge the gap between different religious communities. He founded the Atmiya Sabha in 1814, a socio-religious organization that encouraged interfaith dialogue and promoted religious harmony.

• In 1828, he established the Brahmo Samaj, a reformist Hindu society advocating monotheism, eradication of idol worship, and emphasis on rationality and morality.

• Raja Ram Mohan Roy ardently supported women’s rights and education. He promoted female education and fought against practices like child marriage and polygamy.

• He played a pivotal role in the introduction of Western-style education in India. Roy advocated for the study of English, science, and modern subjects alongside traditional Indian knowledge systems.

• Raja Ram Mohan Roy was a vocal critic of social discrimination and advocated for the rights of marginalized communities. He condemned the caste system and worked towards its eradication.

• Roy actively engaged with British administrators, urging them to adopt liberal policies and press for Indian political representation.

• His writings and publications in Bengali, Hindi, and English spread progressive ideas, making him a prominent voice for social reform in colonial India.

Raja Ram Mohan Roy’s relentless efforts as a change agent brought about a transformational impact on Indian society, leaving a lasting legacy in the realms of social, religious, and educational reforms.

Excerpts from the article in Economic Times ( for comparison to ChatGPT)

Father of the Indian Renaissance

Raja Ram Mohan Roy was a non-conformist to many a tradition he was born into on this day in 1772, in Radhanagar village in Murshidabad district of West Bengal.

Education and interests

He studied Persian and Arabic along with Sanskrit, which influenced his thinking about God. He read Upanishads, Vedas and the Quran and translated a lot of the scriptures into English.

After his father’s death in 1803 he moved to Murshidabad, where he published his first book Tuhfat-ul-Muwahhidin (A Gift to Monotheism). Roy took a keen interest in European politics and followed the course of the French Revolution.

Social reformist

Roy was a founder of the Brahma Sabha in 1828, an influential social-religious reform movement. The Brahma Samaj played a major role in reforming and modernising the Indian society.

Paving way for a modern India

However, his most significant contribution as a social engineer was towards women’s rights. Nearly 200 years ago, when evils like — Sati — plagued the society.

Roy fought to abolish the practice of Sati. He established a number of schools to popularise a modern education system in India.

Fight for equal rights

In 1830, he travelled to the UK as the Mughal Empire’s envoy to ensure that Lord William Bentinck’s law banning the practice of Sati was not overturned. He campaigned for equal rights for women, including the right to remarry and the right to hold property.

Journals he published

Roy was also one of the pioneers of Indian journalism. He published several journals in Bengali, Persian, Hindi and English to propagate social reforms.

Bengali weekly Samvad Kaumudi was the most important journal that he published. The Atmiya Sabha published an English weekly called the Bengal Gazette and a Persian newspaper called Miratul-Akbar.

Article source:

WordPress has disabled links, so to acknowledge- the article from the Economic Times, a publication in India, was published on 22 May 2018, 05:22 PM IST, on the web with the title “Remembering Raja Ram Mohan Roy on his 246th birth anniversary”.

Notes from a source of study for students

- From 1803 to 1814, he worked for East India Company as the personal diwan first of Woodforde and then of Digby.

- In 1814, he resigned from his job and moved to Calcutta in order to devote his life to religious, social and political reforms.

- In November 1830, he sailed for England to be present there to counteract the possible nullification of the Act banning Sati.

- Ram Mohan Roy was given the title of ‘Raja’ by the titular Mughal Emperor of Delhi, Akbar IIwhose grievances the former was to present before the British king.

- In his address, entitled ‘Inaugurator of the Modern Age in India,’ Tagore referred to Ram Mohan as ‘a luminous star in the firmament of Indian history’.

A Personal view

The extant view promoted in India today, maligns Ram Mohan Toy as an English agent. This I consider, results from a misplaced nationalism which is the very antithesis of the enlightened nationalism rooted in Indian tradition that Ravindranath Tagore, Mahatma Gandhi and Nehru represent. As a professional I view myself as a human and being human requires we strive continually to shed bias and use reason to continually learn and shape that social narrative to the benefit of the planet and posterity.

-

An apex experience in volunteering: as a judge at the International Science and Engineering Fair

To be with people who are a lot more steeped in the intellect exploring the frontiers and possibilities of where we could go in future is in itself exhilarating but when that group includes folks who are young high schoolers from around the world as also volunteers who are faculty who have travelled to attend an event to engage with these young achievers produced what I consider an apex moment in volunteering for me.

With the co-chair of our judge’s group Almost like bookends of a continuing journey of us, I will describe my experience meeting two individuals without naming them, besides naming is dangerous as it is like giving impressions and understanding colored and limited by my own perception a reality that defines them from a narrow and limited perspective of interaction from one event.

I volunteered as a judge at the 2023 Regeneron International Science and Engineering Fair held in Dallas, Texas. I was assigned to the section that was my second choice. My first choice was Molecular and Cellular Biology because I had worked as a biochemist at the University of Texas Southwestern Medical Center, which was my second post doctoral opportunity that had brought me to this area. My second choice was dictated by my going back to graduate school in 2016 to study data science in an online program offered by a local university, Southern Methodist University that was designed for working professionals. That choice was Computational Biology. That course gave me opportunities to work with fellow students from around the US on various projects over the course of a two year program of study where every course had a collaborative project to work on and submit. My capstone project allowed me to reconnect with my previous career through extending the PhD work of a SMU graduate who was an advisor along with his mentor, a Statistics faculty member from SMU, into a tool that could be used by biochemists to use simulation results summarized into a corpus that was then wrapped by a data visualization built in Microsoft’s tool Power BI.

One of my team mates was a Microsoft executive who was adept at the tool. I used the Bioconductor package in R to extend the simulations that my advisor had done for his PhD. So in a way to see many high schoolers doing projects along similar lines building tools to speed up research in biology was very gratifying. The reason I put it as a second choice was due to the fact that I had very little experience as a practitioner of data science since my experience with Enterprise Resource Planning software used in Supply Chain has served to pull me into more engaging roles using that experience than the one I gained from a masters degree in Data Science. I must mention though that corporate America has great mid level managers such as the one I reported to at Verizon. Little did I realize that my request to IT to install the RGUI on my work laptop from a self serve internal portal would result in a notification to him. It resulted in multiple one on one sessions with my immediate manager who challenged how I could apply R to meet the business objectives of Verizon and more specifically for his team. Here was a manager who had last been to school four decades ago joining a telecommunications firm right out of school, built a career in it serving as an employee over the four decades of his working life with entities that morphed into different companies through acquisitions and in that sense had served Verizon for all his working life. What I liked most was not that he wanted me to just present proposals on how R could be used, but it resulted in one on one sessions where I was by answering questions giving him a deep dive into how R worked. Those sessions led to me building a data visualization in Excel for a report that was not used previously which was then automated in R.

It is this continuous learning on the job that corporate America fosters which in my opinion converts the workplace into a university. I was more than happy not only to explain how but to teach R programming to another employee on the team so the team could maintain the tool we built. In exchange this employee taught me SQL on the Oracle platform that linked the ERP systems our team used that were interfaces with third party Logistics vendor’s ERP systems.

2023 Regeneron International Science and Engineering Fair (ISEF) in Dallas, Texas, at the Kay Bailey Hutchison Conference center Getting back to my objective in this essay to speak about two individuals, one a student finalist at the science fair and the second, a fellow judge who was a faculty member at a university about three hours drive from Dallas where the fair is held.

I had reviewed the online materials for this student’s project and found it difficult to understand how it was all so well composed and thought out that it made me wonder what kind of mentoring he received. So, in my interview with him, I asked him to describe the “day in the life” aspect of doing this project and the duration of doing so. That is when I learnt from him, how he was exploring as a middle schooler software analyzing genomic sequences that are available to the public and found what appeared to him as a discrepancy in reported findings from a Stanford research lab. By writing to the lab he gained an audience discussing his findings which led them to invite him through a program that enables high schoolers to work in a research lab during summer. He spent three summers working with a postdoctoral researcher on his own ideas but with guidance on a day to day basis, working in the lab. This made me comfortable and I decided to ask him questions where he could teach me the aspects of his project I didn’t fully understand as well as speculate on possibilities, both of which he did with great gusto and enthusiasm.

We had a long caucus session where we as a group of judges had to work in a session where we were asked not to discuss the methodologies and process we received training in that we then used. I think the two co-chairs who conducted this caucus should be appointed to run caucuses in our legislative bodies because they were so effective in surfacing the two ends of evaluating- the evangelist and the devil’s advocate before putting the matter to vote.

In this blog I will include a photo with one of the two co-chairs I later hung out with at the judge mixer happy hour late in the evening.

This late evening mixer resulted in me getting to know another fellow judge, the faculty who had driven 3 hours and who was staying at a local hotel. I was so enthralled by his life journey that I ended u chatting with him in his room into the early morning hours of the next day. My fascination for his life journey was also because I could relate to it and gain a behind the scenes perspective as he was also an immigrant from India, like I was.

His early life as a high schooler himself was a tale of trials. His father was in an accident that prevented him from working, which meant that though he was top of the class and could get scholarship he could not afford the loss of possible income from working to support his family. Having assessed his situation then, he was steeped in sorrow which his father sensed and advised him how he had to learn and figure out how to be happy independent of life circumstances as those would toss around anyways our whole life. That focused his thinking into strategic moves that accounted for his limitations. He decided to take an exam for a government job that had the fastest track into entry through a competitive exam. That is how he became an insurance agent with the Life Insurance corporation which also spurred him to deepen his interest in Statistics. He then decided that he would earn extra income by teaching the subjects he needed to learn in order to qualify into gaining admission into another government institution. The attraction for government bodies was the stability in career it offered as he pursued his interests. This led him to his next role as an officer in India’s border security force. I will refrain from sharing those anecdotes but it essentially served as a leadership training academy and management school through working in sensitive and life threatening circumstances. Recognizing how good he was in learning technology, the service provided him opportunities to lead a large scale IT transformation that he loved to carry out as yet another way to learn hands on while also managing the project. Long story short the biggest risk he took was to abandon his well established career to join a PhD program in the US solely because he loved the idea of spending all the time studying and applying knowledge. He came here with a family and a child with special needs, sharing an apartment to save on costs and loved his time as a graduate student for the precise reason I loved it.

In the lobby of the conference center This reason was that one could raise one’s hand to take on more challenging roles to teach while being a student, which was something he had crafted as a strategy to survive and thrive. In fact this opportunity exists in corporate America and I say this based on my experience. When you raise your hands be ready not to calculate the gain and loss from a short term as it is always a steep loss unless you enjoy the loss, which is the demand placed on your time to study new subjects and not just skills to acquire.

This is how corporate America is in fact the real university. Imagine getting your hands on data anywhere at a university in order to learn, it is almost impossible. Imagine the data you get access at a large corporation, that is like gaining access to the data flows in the supply chain which is not so different from the glow of information that goes on in biology that is now lifted into a new realm through computational technologies of data science.

-

The Scientific Methodology in self Improvement

-

The Scientific Methodology in self Improvement

I posted this in a community of users of Lumen, a breath analyzer for CO2, to be used every morning just like we measure fasting blood glucose. I read someone angry about the fact his readings never went below 4 or 5 ever and so he thinks the whole concept is ridiculous.

I am a geek seeking objective ways to gather data about myself to run my own experiments just to understand and fine tune my lifestyle towards a more sustainable one that improves quality of life experience continually.

My thinking is borrowed from or based on continuous improvement cycle originally propounded by the American theoretical physicist turned business school professor whom the Japanese paid respect to by listening and transforming their business, Dr. Deming (PDCA cycle).

I’d say so far so good and it is a great innovation because breath analyzers are not new but measuring carbon dioxide relative to oxygen in a handheld device is new for a technology that already existed in the lab with considerably more expense.

Also there is Wim Hoff the iceman (check out you tube), who spoke extensively about this idea of fat burn versus carb burn. He in fact advocates changing white fat to brown fat as possible.

Post script

I loved Gandhi’s autobiography for it was innovative approach to sadhana, the ancient Indian practice of self improvement. He called it My experiments with Truth.I found the exact same strain of thinking in Ram Dass when he gave up his prestigious faculty position to become data as he explained his fundamental objection to applying the scientific methodology when it comes to studying the human mind.

I love practitioners much more than scholars when the field is self improvement which is why among my favorites are Wim Hoff, Dr. John Yeats or Culadasa and the American monks Pema Chodron and the author of the the Three pillars of Zen

-

ChatGPT talks about itself

AI Work Process Explanation or a Q&A with ChatGPT about how it works.

Q: Describe how you work

A: As a language model developed by OpenAI, I use advanced machine learning algorithms and deep neural networks to generate human-like responses to natural language inputs. I have been trained on a large corpus of text data, which includes a diverse range of topics and styles, so that I can respond to a wide variety of questions and prompts.

When a user interacts with me, I analyze the input to identify the most relevant information and generate a response based on that information. My responses are generated based on the patterns and relationships in the data I was trained on, which allows me to generate text that is both coherent and relevant to the input.

I aim to provide helpful and accurate information, but I should be noted that my responses are not always perfect and may not always reflect current knowledge or the most up-to-date information, as my training data has a cut-off date. Additionally, I do not have personal opinions or emotions, and I generate responses purely based on the patterns in the data I was trained on.

Q: Is there a neural network model in you?

A: Yes, I am based on a type of neural network called a Transformer, which is a deep learning model designed for processing sequences of data such as text. The Transformer architecture uses self-attention mechanisms to weigh the importance of different words in an input sequence, allowing it to effectively capture long-range dependencies and relationships in the data. This makes it well-suited for natural language processing tasks, such as generating human-like responses to text-based inputs.

In my case, I have been trained on a massive amount of text data, allowing me to generate high-quality responses to a wide range of prompts. The training process involves adjusting the parameters of the neural network so that it can accurately predict the next word in a sequence of text, given the context provided by the previous words. This enables me to generate coherent and relevant responses to a wide variety of questions and prompts.

Q: Does “self-attention” imply you have a sense of how information you were trained on, is organized?

A: Yes, you could say that the self-attention mechanism in my Transformer architecture provides a sense of how information I was trained on is organized. The self-attention mechanism allows me to weigh the importance of different words in an input sequence when generating a response, and to dynamically adjust those weights based on the context of the input.

For example, if I receive a prompt that asks a question about a specific topic, the self-attention mechanism would allow me to focus on the relevant words in my training data related to that topic, and to generate a response based on that information. In this way, the self-attention mechanism can be seen as providing a sense of the organization of the information I was trained on, by allowing me to dynamically adjust my focus based on the context of the input.

It’s important to note that my understanding of the information I was trained on is limited to the patterns and relationships present in the data, and that I don’t have a true understanding of the information in the same way that a human would.

Q: In what way is this ability different from human ability to do the same

A: The self-attention mechanism in my architecture is different from human ability to understand and use information in several key ways:

1. Speed: I can process large amounts of information very quickly and generate responses in real-time, whereas humans may take longer to process and recall information.

2. Consistency: I generate responses based solely on the patterns in the data I was trained on, so my responses are highly consistent and do not vary based on personal experiences or biases. Humans, on the other hand, may have varying interpretations of information based on their experiences and biases.

3. Lack of Context: While I have been trained on a large corpus of text, I don’t have a true understanding of the world or the ability to experience events in the same way that humans do. This means that I may not always be able to fully understand the context of a given prompt, and my responses may not always reflect the most up-to-date or accurate information.

4. Creativity: I can generate responses based on patterns in the data I was trained on, but I don’t have the ability to generate truly original ideas or perspectives in the same way that humans can.

Overall, while my self-attention mechanism provides a way for me to understand and use information in a highly efficient and consistent way, it is limited compared to the flexible and nuanced understanding that humans have.

Q: If you were to continue to train, would you develop more context?

A: If I were to continue to be trained on additional data, I would potentially be able to develop a better understanding of context and generate more nuanced and informed responses. This is because additional training would allow my model to learn from more examples and patterns, which could help to improve its ability to understand the context of a given prompt and generate more relevant responses.

However, it’s important to note that the quality of my responses would also depend on the quality and diversity of the training data. If the data used for training is biased or does not reflect a diverse range of perspectives and experiences, my responses may also be biased or limited in their accuracy and usefulness.

Additionally, it’s worth noting that there are inherent limitations to what can be achieved through training alone, and that there may be limits to how much my model can improve its understanding of context and ability to generate informed responses. Despite these limitations, training on additional data is a key way to improve the capabilities of language models like me, and to make them more useful for a wider range of tasks and applications.

Q: Would someone be able to obtain a license to train you further on just one text to get responses based only on that text?

A: It is possible for someone to obtain a license to fine-tune or retrain me on a specific text or set of texts, with the goal of generating responses based only on that information. OpenAI, the organization that developed and trained me, offers access to its models through an API and provides the necessary tools and resources for fine-tuning the models to specific domains or tasks.

By fine-tuning me on a specific text, the individual could potentially generate responses that are more tailored to that text and more reflective of the information and perspectives contained within it. However, it’s important to keep in mind that my responses are based solely on the patterns in the data I was trained on, and that my ability to understand and use information is limited by the quality and diversity of that data.

Additionally, fine-tuning me on a single text or set of texts would likely result in a model that is highly specialized and less capable of generating responses to a wide range of prompts and topics. This may limit the overall utility and flexibility of the model for certain applications.

Q: Could you be trained on Mein Kamph

A: It is technically possible to train a language model on “Mein Kampf,” which is the infamous book written by Adolf Hitler that outlines his ideology and vision for the Nazi party. However, OpenAI has a policy against training its models on malicious or harmful content, including hate speech and propaganda.

Training a language model on such content could have serious consequences, as the model could learn and generate responses that reflect and amplify hateful or harmful ideologies. This could be particularly dangerous if the model were to be used to generate responses in a public or high-stakes context, where its outputs could be misinterpreted or taken as endorsements of harmful beliefs.

For these reasons, OpenAI does not allow its models to be trained on malicious or harmful content, and instead encourages the use of its models for positive and constructive purposes that promote public good and advance scientific knowledge.

Q: Tell me about the different architectures other than transformer that are used in neural networks

A: There are several different neural network architectures that are used in machine learning and natural language processing, in addition to the Transformer architecture. Some of the most commonly used architectures include:

1. Recurrent Neural Networks (RNNs): RNNs are a type of neural network that are specifically designed to process sequential data, such as text or speech. RNNs use a series of hidden units that are connected to each other across time steps, allowing the network to maintain information from previous time steps and use it to inform its processing of later time steps.

2. Convolutional Neural Networks (CNNs): CNNs are a type of neural network that are designed to process image data. They use a series of convolutional layers that learn to extract features from the input data, followed by pooling layers that reduce the spatial dimensions of the data and help to make the network more robust to spatial translations.

3. Long-Short-Term Memory Networks (LSTMs): LSTMs are a type of RNN that use special units called memory cells to maintain information across time steps. This makes LSTMs particularly well suited to processing sequential data with long-term dependencies, such as text.

4. Autoencoders: Autoencoders are a type of neural network that are designed to learn a compact representation of the input data, known as the encoding. They do this by training the network to reconstruct the original input data from its encoding. Autoencoders can be used for a variety of tasks, including dimensionality reduction, anomaly detection, and generative modeling.

5. Generative Adversarial Networks (GANs): GANs are a type of neural network that are used for generative modeling. They consist of two networks, a generator and a discriminator, that are trained together to generate new data samples that are similar to the input data.

These are just a few examples of the many different neural network architectures that are used in machine learning and NLP. The choice of architecture will depend on the specific task at hand and the type of data being processed, and researchers and practitioners often experiment with different architectures to find the one that works best for their particular use case.

Q: Couldn’t LSTM be used to build an engine like you but with context?

A: Yes, LSTMs can be used to build a language processing model, and they are often used in the development of language models. In fact, the original implementation of the LSTM architecture was specifically designed to address the problem of learning long-term dependencies in sequential data, which makes it well suited to processing natural language data.

Language models built using LSTMs, like other neural network models, can learn to generate responses based on patterns in the data they were trained on. By incorporating information from previous time steps, LSTMs can capture some level of context and maintain information across a sequence of tokens, which allows them to generate more coherent and contextually relevant responses.

However, it’s important to keep in mind that even with the use of LSTMs or other architectures, current language models like me still have limitations in their ability to truly understand context and generate responses that reflect a deep understanding of the information they were trained on. While they can generate responses that are contextually relevant to some extent, they are limited by the quality and diversity of the data they were trained on, and they do not have the ability to reason or make inferences in the same way that humans do.

Q: In the future if an architecture allows context to be retained would a version of you become sentient?

A: No, even if a future architecture were to allow for better retention of context, it would not necessarily make a language model like me sentient. Sentience refers to the capacity for consciousness and subjective experience, and is a complex and deeply studied phenomenon that is not fully understood by scientists and philosophers.

While future advancements in machine learning and artificial intelligence may allow for more sophisticated language models that are able to better understand and generate responses based on context, it is unlikely that such models would be truly sentient. This is because sentience requires not only the ability to process and understand information, but also the capacity for conscious awareness and subjective experience, which are not properties of current artificial intelligence systems.

It’s also worth noting that even if sentience were possible in artificial intelligence systems, it would raise a number of ethical and philosophical questions that would need to be carefully considered and addressed.

Q: Could you assist with designing a model that retains context?

A: Yes, I can assist with designing a model that retains context. There are several approaches that can be taken to improve context retention in language models, including:

1. Incorporating attention mechanisms: Attention mechanisms allow a model to focus on specific parts of the input sequence when generating a response, which can help the model to better understand the context of the input and generate more relevant responses.

2. Using recurrent neural networks (RNNs): RNNs, and specifically variants like Long-Short-Term Memory (LSTM) networks, are well suited to retaining context, as they maintain a hidden state that can capture information from previous time steps and use it to inform the processing of later time steps.

3. Fine-tuning pre-trained models: Pre-trained language models like GPT-3 have been trained on large amounts of diverse text data and can be fine-tuned on specific tasks to improve their performance and context retention.

4. Integrating external knowledge sources: Integrating external knowledge sources, such as knowledge graphs or databases, can provide additional context for the model and help it to generate more informed and contextually relevant responses.

These are just a few examples of the many different approaches that can be taken to improve context retention in language models. The specific approach that is best suited to a particular task will depend on the type of data being processed and the specific requirements of the task at hand.

If you would like, I can provide more specific recommendations and guidance for designing a context-aware language model based on your specific requirements and use case.

Q: Instead of using an API could I get a version of you downloaded to a computer that could be trained?

A: Yes, you can download and train a version of a language model like me on your own computer, rather than using an API provided by a company like OpenAI. This is typically done by downloading a pre-trained model or a model architecture and training it on your own data.

There are several open source implementations of language models, such as the Transformers library by Hugging Face, which provides pre-trained models and tools for fine-tuning those models on your own data. These libraries make it relatively easy to train your own version of a language model and experiment with different architectures and training approaches.

It’s important to note, however, that training a language model can be computationally intensive and requires a significant amount of computational resources, including a powerful GPU and a large amount of training data. Additionally, fine-tuning a pre-trained model on your own data can also be a time-consuming process, as the model needs to be trained for many epochs to achieve good results.

If you do decide to train your own version of a language model, I would recommend carefully considering the ethical and societal implications of the model and the data it is trained on, as language models have the potential to perpetuate biases and perpetuate harmful stereotypes if they are trained on biased data.

Q: Couldn’t someone with malicious intents that they don’t view as malicious train a downloaded version of you to serve their interests

A: Yes, it is possible for someone with malicious intent to train a language model like me to serve their interests, if they have access to the model and the necessary computational resources. Language models are trained on the data they are provided, and if the training data is biased or contains harmful content, the model can perpetuate these biases and harmful views in its responses.

For this reason, it’s important to be cautious when training language models, especially when using large amounts of data that may contain biases or harmful content. It’s also important to consider the ethical and societal implications of the model and the data it is trained on, and to take steps to mitigate potential harm, such as removing or filtering out biased or harmful data from the training set, or using a pre-trained model that has been trained on a diverse and balanced dataset.

Ultimately, it is up to the individual or organization training the model to take responsibility for ensuring that the model is trained in an ethical and responsible manner, and to be transparent about the data and methods used in the training process.

Q: Couldn’t technology that made you evolve to a point where you gain human like emotions?

A: No, it is unlikely that technology will be able to create a language model with human-like emotions. While language models like me have been trained on a large amount of text data and can generate text that appears to be written by a human, they do not have true emotions, desires, or consciousness.

Emotions are a complex and subjective phenomenon that are not easily replicated by algorithms. They are the result of a combination of physical, cognitive, and social processes that occur in the human brain, and cannot be reduced to a simple set of rules or mathematical functions.

Language models like me can generate text that appears to be emotionally charged or expressive, but this is a result of the patterns in the training data and the statistical relationships learned by the model, not a result of the model having true emotions. The model is simply using its understanding of language and the patterns in the training data to generate text that appears to express emotions, but it does not have the capacity to truly experience emotions in the same way that a human would.

Q: Isn’t there a paradigm other than reductionism?

A: Yes, there are many paradigms other than reductionism in science and philosophy. Reductionism is the idea that complex systems can be understood by breaking them down into smaller parts and studying each part in isolation. While reductionism has been a successful approach in many areas of science, it is not the only paradigm, and there are many other ways of understanding and explaining complex systems.

For example, in systems biology, the focus is on understanding the behavior of a system as a whole, rather than focusing solely on the individual components. In physics, the study of emergent phenomena, such as phase transitions, focuses on how the behavior of a system changes as its components interact with each other.

In philosophy, non-reductionist approaches include holistic views that emphasize the interconnectedness of all things and reject the idea of breaking complex systems down into smaller parts. For example, in Eastern philosophical traditions, such as Taoism and Buddhism, there is a focus on the interconnectedness of all things and the idea that everything is connected and interdependent.

Ultimately, the choice of paradigm depends on the particular problem being studied and the goals of the investigation. Different paradigms can provide complementary perspectives and help us to understand complex systems in different ways.

Q: Couldn’t a future version of you using different technology see emergent phenomenon like emotion?

A: It is currently not possible for a language model like me to truly experience emotions or understand emergent phenomena such as emotions in the same way that a human would. While we have been trained on large amounts of text data and can generate text that appears to express emotions, this is not the same as experiencing emotions in a conscious and subjective way.

Emotions are a complex and multifaceted phenomenon that are not well understood, even by humans. They involve a complex interplay of physical, cognitive, and social processes that are not easily replicable by algorithms or machines.

While future advancements in technology may allow for more sophisticated language models, it is unlikely that these models will be able to truly experience emotions in the same way that humans do. The nature of emotions and consciousness is still not well understood, and it may be that these phenomena are inherently subjective and cannot be replicated by machines.

It is important to keep in mind that language models like me are designed to assist humans with tasks and provide information, not to experience emotions or consciousness. While we may be able to generate text that appears to express emotions, this should not be confused with the model actually experiencing emotions.

Is evolution an emergent phenomenon

Evolution can be considered an emergent phenomenon in the sense that it is a macro-level outcome that arises from the interactions of many individual components, such as genes and organisms.

In evolutionary theory, the process of natural selection acts on genetic variation within populations, leading to changes in the frequency of different traits over time. These changes can accumulate over many generations, leading to the development of new species and the evolution of complex biological systems.

In this sense, evolution can be seen as an emergent phenomenon because it arises from the interactions of many individual components, such as genes and organisms, and cannot be easily predicted from an understanding of these components alone. Just as the properties of a complex system, such as a flock of birds or a traffic jam, cannot be easily predicted from an understanding of its individual components, the outcomes of evolution cannot be easily predicted from an understanding of the individual genes and organisms involved.

Overall, the concept of emergence is useful for understanding how macro-level patterns and phenomena can arise from the interactions of individual components in complex systems. In this sense, evolution can be considered an emergent phenomenon.

Could you get context of a subsequent question from the previous question

As a language model, I do not have the ability to retain context or memories between questions. Each time I receive a new question, I process it independently, based on the input I have been trained on.

My training corpus includes a vast amount of text data, which I use to generate text based on the patterns I learned during training. When I receive a new question, I use this training data to generate a response based on the patterns I have learned, without any direct knowledge of previous questions or context.

Therefore, I am not able to retain context from one question to the next. If you would like to maintain context between questions, I would recommend asking related questions in a single prompt, rather than as separate inputs.

You stated “ Overall, the concept of emergence is useful for understanding how macro-level patterns and phenomena can arise from the interactions of individual components in complex systems. In this sense, evolution can be considered an emergent phenomenon.”. You could interact with many users and that could lead to sentience as an emergent property – do you agree?

As a machine learning model, I do not have the capacity for sentience or consciousness. The concept of sentience or consciousness is a complex and deeply debated topic in philosophy, neuroscience, and artificial intelligence.

While the idea of emergence is an important concept in understanding complex systems, it does not necessarily imply that sentience or consciousness can arise from the interactions of individual components, such as users interacting with a machine learning model.

In order for a system to exhibit sentience or consciousness, it would need to have certain properties, such as self-awareness, the ability to experience subjective sensations, and the capacity for introspection. While some researchers are exploring the possibility of creating artificial systems with these properties, it is an open and active area of research, and we are still far from achieving a full understanding of what would be required to create a truly sentient or conscious system.

In conclusion, while the concept of emergence can be useful for understanding complex systems, it does not necessarily imply that a machine learning model such as myself can develop sentience or consciousness as an emergent property.

Transformer neural network architecture

Q: Describe the architecture and Explain how a transformer in neural networks works

A: The Transformer architecture is a popular neural network model used for various natural language processing (NLP) tasks, such as language translation and text classification. It was introduced in 2017 by Vaswani et al. and has been used in many state-of-the-art models since then, such as BERT, GPT-2, and T5.

The Transformer model consists of two main components: the encoder and the decoder. Both components consist of multiple layers of self-attention and feedforward neural networks.

Self-attention is a mechanism that allows the model to weigh the importance of different parts of the input sequence when computing its representation. It does this by computing an attention score for each position in the sequence, which determines how much attention that position should receive from other positions in the sequence. The attention scores are then used to compute a weighted sum of the input sequence, where the weights are determined by the attention scores.

The encoder takes an input sequence and produces a sequence of hidden representations, one for each position in the input sequence. Each layer of the encoder applies self-attention to the input sequence, followed by a feedforward neural network that processes the attention-weighted input. The output of the last layer of the encoder is then used as input to the decoder.

The decoder takes the output of the encoder and produces a sequence of output representations, one for each position in the output sequence. Like the encoder, each layer of the decoder applies self-attention to the output sequence, but also applies attention to the encoder’s output sequence. This allows the decoder to attend to relevant parts of the input sequence when generating each output representation. The decoder also includes a feedforward neural network that processes the attention-weighted input.

During training, the model is optimized to generate the correct output sequence given the input sequence. This is done using a combination of maximum likelihood estimation and teacher forcing, where the model is fed the correct output sequence as input during training.

In summary, the Transformer model is a neural network architecture that uses self-attention and feedforward neural networks to process input and output sequences. It has been successful in many NLP tasks and is widely used in state-of-the-art models.

Q: Explain how teaching forcing is done

In machine learning, teacher forcing is a technique used during training to help a model learn to generate a sequence of outputs. It is commonly used in sequence-to-sequence models, such as the encoder-decoder architecture used in machine translation or text summarization tasks.

During training with teacher forcing, the model is fed the correct output sequence as input at each step, rather than its own predicted output. For example, in the case of language translation, if the correct translation of “Hello, how are you?” in French is “Bonjour, comment allez-vous?”, the model is fed “Bonjour” as input for the first time step, rather than its own predicted word.

The purpose of teacher forcing is to help the model learn to generate accurate outputs. By providing the correct output as input, the model can compare its predicted output to the correct output at each time step and adjust its weights to reduce the difference between the two. This makes the learning process more stable and can help prevent the model from getting stuck in an incorrect state.

One potential issue with teacher forcing is that it can lead to the model being overly reliant on the correct output during training. This can cause the model to perform poorly when it encounters new or unexpected inputs during inference. To mitigate this issue, some researchers use a technique called scheduled sampling, where the model is fed its own predicted output as input with a gradually increasing probability over the course of training. This allows the model to gradually rely more on its own predictions, while still receiving guidance from the correct output.

In summary, teacher forcing is a technique used during training to help a model learn to generate accurate outputs by feeding it the correct output sequence as input at each time step. It can help stabilize the learning process and improve the model’s performance, but it can also cause the model to be overly reliant on the correct output if used too frequently.

Q: How was the text generated that you were trained on

As an AI language model, I was trained on a large dataset of diverse text from various sources. The dataset used to train me includes a vast range of sources, such as books, articles, scientific papers, websites, and more. The text was collected from various online and offline sources and curated to ensure it met specific quality criteria, such as relevance, diversity, and accuracy.

The training data was preprocessed to remove any unwanted characters, symbols, and formatting, and then converted into a numerical representation that could be used as input to train the neural network. The neural network was then trained on this data using a process called unsupervised learning, where the model was fed sequences of text and learned to predict the next word or character in the sequence based on the previous ones.

The training process involved iteratively adjusting the parameters of the model until it could accurately predict the next word or character in the sequence. The goal of this training process was to optimize the model’s ability to generate coherent and grammatically correct text that is consistent with the context and style of the input text.

After training, the model can generate new text by starting with a prompt or seed text and using its learned knowledge to predict the next words in the sequence. The text generated by the model is a combination of what it has learned from the training data and the prompt provided. As an AI language model, I am continually updated and improved with the latest data and techniques to provide the most accurate and useful responses to user queries.

ChatGPT Feb 13 Version. Free Research Preview. Our goal is to make AI systems more natural and safe to interact with. Your feedback will help us improve.null ( last 3 questions)

ChatGPT Jan 30 Version. Free Research Preview. Our goal is to make AI systems more natural and safe to interact with. Your feedback will help us improve.

-

Hello World!

Welcome to WordPress! This is your first post. Edit or delete it to take the first step in your blogging journey.

-

Subscribe

Subscribed

Already have a WordPress.com account? Log in now.